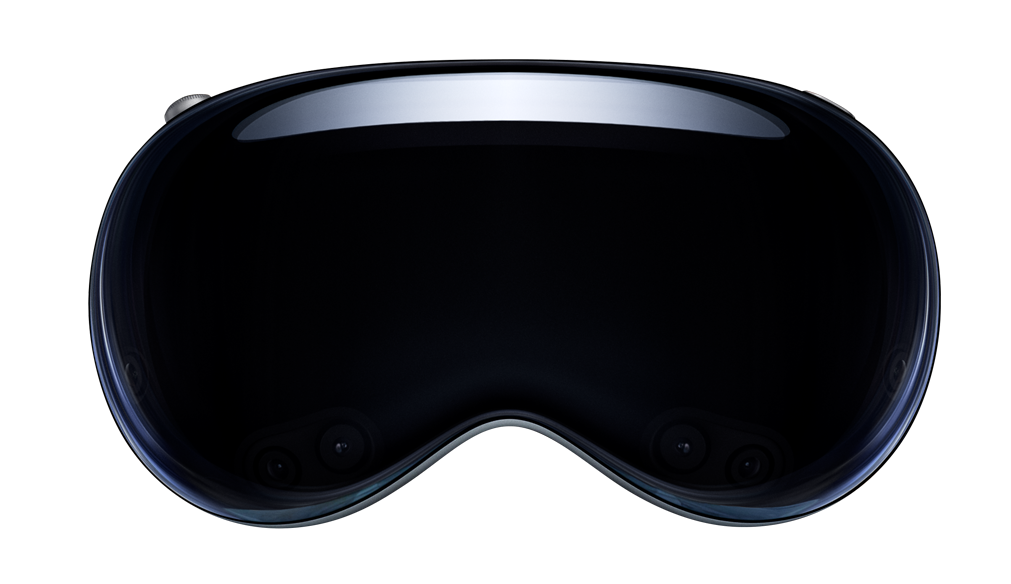

Developing with Vision Pro

Developing with visionOS

New exciting realities await you when developing apps for Vision Pro.

visionOS development requires Xcode 15.2 or higher.

Note

You will need an Apple developer account to download developer tools and SDKs.

Create a visionOS project

You can develop with a physical Vision Pro plugged in or using the Vision Pro Simulator.

You will need NativeScript CLI 8.7 or higher:

npm install -g nativescript@latest

You can now use the --vision (or --visionos) flag when creating your app.

ns create myapp --vision

This will set up a preconfigured, visionOS-ready app using a plain TypeScript base.

If you prefer a flavor, you can use any of the following:

- Angular:

ns create myapp --vision-ng

- React:

ns create myapp --vision-react

- Solid:

ns create myapp --vision-solid

- Svelte:

ns create myapp --vision-svelte

- Vue (3.x):

ns create myapp --vision-vue

All projects are preconfigured with tailwindcss.

Run your visionOS project

Open the Vision Pro Simulator, then run your app with:

ns run vision --no-hmr

The vision platform target is a shorthand alias for visionos, so this can also be used:

ns run visionos --no-hmr

Develop with physical Vision Pro

You can use a Developer Strap to connect your Vision Pro to your Mac.

The Developer Strap is an optional accessory that provides a USB-C connection between Apple Vision Pro and Mac and is helpful for accelerating the development of graphics-intensive apps and games. The Developer Strap provides the same audio experience as the in-box Right Audio Strap, so developers can keep the Developer Strap attached for both development and testing.

Once connected, you can run ns device to list all connected physical devices:

% ns device

Searching for devices...

Connected devices & emulators

┌───┬──────────────────┬──────────┬───────────────────────────┬────────┬───────────┬─────────────────┐

│ # │ Device Name │ Platform │ Device Identifier │ Type │ Status │ Connection Type │

│ 1 │ Apple Vision Pro │ visionOS │ 00008112-001A10812278A01E │ Device │ Connected │ USB │

You can then run on that device as follows:

ns run visionos --no-hmr --device=00008112-001A10812278A01E

What makes a project work on visionOS?

Two key elements make up a NativeScript-driven visionOS project:

- App_Resources/visionOS/src/NativeScriptApp.swift

- The following dependencies:

{

"dependencies": {

"@nativescript/core": "~8.8.0"

},

"devDependencies": {

"@nativescript/visionos": "~8.8.0",

"@nativescript/webpack": "~5.0.0"

}

}

Design Guidelines and Notes

We strongly encourage developers to understand and use Apple's system glass materials throughout their apps in addition to closely following their design guidelines.

We recommend watching the following WWDC 2023 videos covering visionOS for fundamental understandings:

- Design for spatial user interfaces

- Design considerations for vision and motion

- Meet UIKit for spatial computing

- Create accessible spatial experiences

- Explore immersive sound design

- Deliver video content for spatial experiences

- Create a great spatial playback experience

You may be interested in more here.

CSS Adjustments for visionOS

You will likely want to make your Pages transparent to allow the natural glass materials to come through by using this CSS specifier:

.ns-visionos Page {

background-color: transparent;

}

When running your app on visionOS, you can scope CSS selectors where needed with the root-level .ns-visionos class.

Hover effect for visionOS materials

All standard/system UI Component usages like Button, Switch, Pickers, etc. will automatically get system hover style effects on visionOS.

It's common to add tap bindings in NativeScript to views like StackLayout, GridLayout, etc., which are plain UIViews and do not get a hover effect automatically.

You can use new @nativescript/core APIs to easily enable visionOS hover styles on any view type throughout your app or customize per view.

Apple discusses some of the important spatial considerations with these effects in this session.

Each view can specify its own custom visionHoverStyle as follows:

<GridLayout visionHoverStyle="{{visionHoverStyle}}" tap="{{tapAction}}"/>

The visionHoverStyle property can be defined as a string or VisionHoverOptions.

import { VisionHoverOptions } from '@nativescript/core'

const visionHoverStyle: VisionHoverOptions = {

effect: 'highlight',

shape: 'rect',

shapeCornerRadius: 16,

}

This would apply a visionOS system highlight rectangle with a cornerRadius of 16 to that GridLayout when a hover is detected.

The options are as follows:

export type VisionHoverEffect = 'automatic' | 'highlight' | 'lift'

export type VisionHoverShape = 'circle' | 'rect'

export type VisionHoverOptions = {

effect: VisionHoverEffect

shape?: VisionHoverShape

shapeCornerRadius?: number

}

When a string is provided, it looks for a predefined visionHoverStyle in TouchManager.visionHoverOptions whose key matches the string. This allows you to predefine and share custom visionHoverStyles across your entire app.
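The string lookup described above can be sketched in plain TypeScript. This is only an illustration of the idea, not the @nativescript/core implementation: the types are local copies of the ones shown above so the snippet runs on its own, and `hoverRegistry` and `resolveHoverStyle` are hypothetical names standing in for TouchManager.visionHoverOptions and whatever the framework does internally.

```typescript
// Local copies of the documented types, so the sketch runs without @nativescript/core.
type VisionHoverEffect = 'automatic' | 'highlight' | 'lift'
type VisionHoverShape = 'circle' | 'rect'
type VisionHoverOptions = {
  effect: VisionHoverEffect
  shape?: VisionHoverShape
  shapeCornerRadius?: number
}

// Hypothetical stand-in for TouchManager.visionHoverOptions.
const hoverRegistry: Record<string, VisionHoverOptions> = {
  default: { effect: 'highlight', shape: 'rect', shapeCornerRadius: 16 },
  round: { effect: 'lift', shape: 'circle' },
}

// Hypothetical helper: a string is treated as a key into the registry,
// while an options object is used as-is.
function resolveHoverStyle(
  style: string | VisionHoverOptions,
  registry: Record<string, VisionHoverOptions>,
): VisionHoverOptions | undefined {
  if (typeof style === 'string') {
    // Only own keys count; unknown names resolve to undefined.
    return Object.prototype.hasOwnProperty.call(registry, style)
      ? registry[style]
      : undefined
  }
  return style
}
```

In this sketch an unknown name simply resolves to undefined; how the framework itself treats a name that is missing from the registry is not covered here.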

You can enable these effects globally throughout your app for any view which has a tap binding by enabling:

TouchManager.enableGlobalHoverWhereTap = true

You can also predefine any number of custom visionHoverStyles to use throughout your app. You could do so in app.ts or main.ts (the bootstrap file), for example:

TouchManager.enableGlobalHoverWhereTap = true

TouchManager.visionHoverOptions = {

default: {

effect: 'highlight',

shape: 'rect',

shapeCornerRadius: 16,

},

slimBox: {

effect: 'lift',

shape: 'rect',

shapeCornerRadius: 8,

},

round: {

effect: 'lift',

shape: 'circle',

},

}

You could then apply custom visionHoverStyles by their name anywhere in your app:

<GridLayout visionHoverStyle="default" tap="tapAction"/>

<GridLayout visionHoverStyle="slimBox" tap="tapAction"/>

<GridLayout visionHoverStyle="round" tap="tapAction"/>

You can also disable a hoverStyle on any view by adding the visionIgnoreHoverStyle property if desired.

Note

When no visionHoverStyle is defined and TouchManager.enableGlobalHoverWhereTap is not enabled, visionOS uses its default behavior: standard controls get hover styles as mentioned above, and other views get none.
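The rules in this section can be summarized as a small decision function. This is a sketch of the behavior as described here, not the framework's code: `ViewHoverConfig`, `HoverSource`, and `pickHoverSource` are hypothetical names, and the order of the checks (visionIgnoreHoverStyle first, then an explicit visionHoverStyle, then the global flag) is an assumption made for illustration.

```typescript
// Hypothetical labels for where a view's hover behavior comes from.
type HoverSource = 'ignored' | 'explicit' | 'global' | 'system-default'

// Hypothetical summary of the hover-related settings on one view.
interface ViewHoverConfig {
  hasVisionHoverStyle: boolean // visionHoverStyle is set on the view
  ignoreHoverStyle: boolean // visionIgnoreHoverStyle is set on the view
  hasTapBinding: boolean // the view has a tap binding
}

// Assumed precedence: ignore > explicit style > global flag > system default.
function pickHoverSource(
  view: ViewHoverConfig,
  enableGlobalHoverWhereTap: boolean,
): HoverSource {
  if (view.ignoreHoverStyle) return 'ignored'
  if (view.hasVisionHoverStyle) return 'explicit'
  if (enableGlobalHoverWhereTap && view.hasTapBinding) return 'global'
  // Left to visionOS: standard controls get a hover style, other views get none.
  return 'system-default'
}
```

For example, a GridLayout with a tap binding and no visionHoverStyle falls through to 'system-default' unless the global flag is enabled, which matches the note above.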

View template visionOS scoping

You can also scope sections of your view templates specifically for visionOS layouts as needed:

<visionos>

<Label>I only show on visionOS</Label>

</visionos>

<ios>

<Label>I only show on iOS</Label>

</ios>

<android>

<Label>I only show on Android</Label>

</android>

Note

You should not have to do a lot of this throughout apps in general but these options are available to you where desired.

NativeScript and the SwiftUI App Lifecycle

Starting with NativeScript 8.6 we support the SwiftUI App Lifecycle for the first time. For a better understanding of the SwiftUI App Lifecycle, we recommend the following articles:

- https://peterfriese.dev/posts/ultimate-guide-to-swiftui2-application-lifecycle/

- https://dev.to/sam_programiz/swiftui-app-life-cycle-2n68

How can we tell the compiler about the entry point to our application?

Historically with NativeScript apps, we would use the Objective-C main entry to define the entry point where the NativeScript engine was initialized and your app was booted.

We now also support a SwiftUI @main entry via a single App_Resources/visionOS/src/NativeScriptApp.swift file:

import SwiftUI

@main

struct NativeScriptApp: App {

var body: some Scene {

NativeScriptMainWindow()

}

}

The NativeScriptMainWindow is a SwiftUI WindowGroup which returns a Scene, your NativeScript app. In visionOS apps, you can expand this struct to support new Scenes and Spaces with new and exciting window styles like volumetric as well as Immersive Spaces.

NativeScriptMainWindow is a SwiftUI struct representing a Scene itself which looks like this:

struct NativeScriptMainWindow: Scene {

var body: some Scene {

WindowGroup {

NativeScriptAppView(found: { windowScene in

NativeScriptEmbedder.sharedInstance().setWindowScene(windowScene)

}).onAppear {

// Your app is booted here!

DispatchQueue.main.async {

NativeScriptStart.boot()

}

}

}

.windowStyle(.plain)

}

init() {

// NativeScript engine is configured here!

NativeScriptEmbedder.sharedInstance().setDelegate(NativeScriptViewRegistry.shared)

NativeScriptStart.setup()

}

}

Note

This is currently enabled for visionOS only in NativeScript; however, it will be used in iOS and macOS apps in the future.

Support multiple windows

To add volumetric windows and immersive spaces, be sure to add the following setting to your App_Resources/visionOS/Info.plist:

<key>UIApplicationSceneManifest</key>

<dict>

<key>UIApplicationSupportsMultipleScenes</key>

<true/>

</dict>

Vision Pro Tutorials in all Flavors

You can follow along in these "Vision Pro 🥽 Hello World" tutorials:

- Develop Vision Pro 🥽 apps with TypeScript

- Develop Vision Pro 🥽 apps with Angular

- Develop Vision Pro 🥽 apps with React

- Develop Vision Pro 🥽 apps with Solid

- Develop Vision Pro 🥽 apps with Svelte

- Develop Vision Pro 🥽 apps with Vue

Vision Pro for Angular Developers

This tutorial was hosted by This Dot Media

Blog Posts

Particle Systems via RealityKit and Multiple Scenes during Vision Pro development with NativeScript

https://blog.nativescript.org/particles-and-multiple-scenes-vision-pro-development/

How to add visionOS to an existing app?

https://blog.nativescript.org/add-visionos-to-existing-nativescript-app/
